Generating 3D Talking Face Landmarks from Speech

|

This project is supported by the National Science Foundation under grant No. 1741472, titled "BIGDATA: F: Audio-Visual Scene Understanding". |

|

What is the problem?

The visual cues from a talker's face and articulators (lips, teeth, tongue) are important for speech comprehension. Trained professionals are able to understand what is being said by purely looking at lip movements (lip reading) [1]. For ordinary people and the hearing impaired population, the presence of visual signals of speech has been shown to significantly improve speech comprehension, even if the visual signals are synthetic [2]. The benefits of adding the visual speech signals are more pronounced when the acoustic signal is degraded, due to background noise, communication channel distortion, and reverberation.

In many scenarios such as telephony, however, speech communication is still acoustical. The absence of the visual modality can be due to the lack of cameras, the limited bandwidth of communication channels, or privacy concerns. One way to improve speech comprehension in these scenarios is to synthesize a talking face from the acoustic speech in real time at the receiver's side. A key challenge of this approach is to make sure that the generated visual signals, especially the lip movements, well coordinate with the acoustic signals, as otherwise more confusions will be introduced.

What is our approach?

More details will be available soon.

Our Results

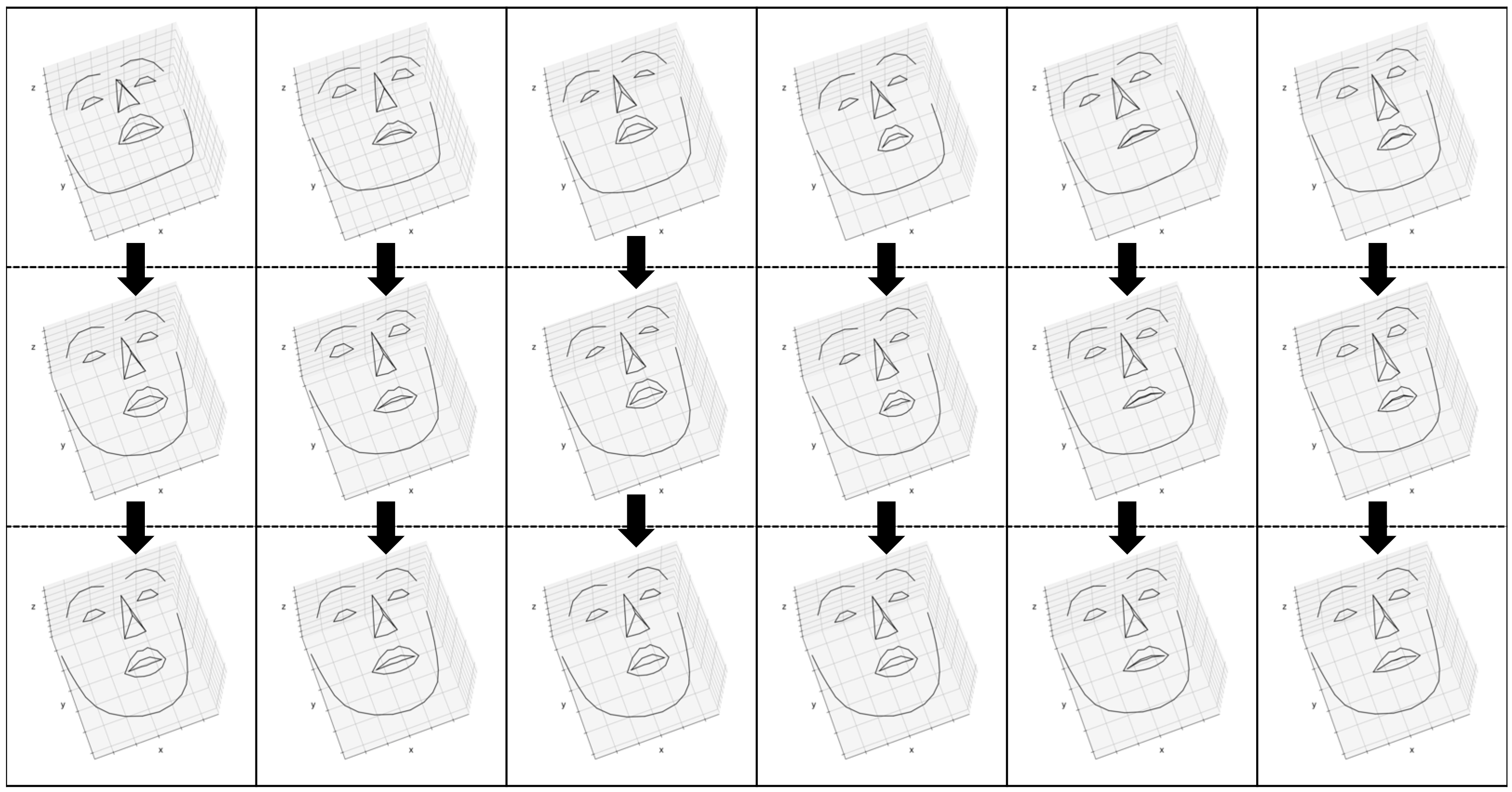

The following examples are generated from unseen speech samples. The left column shows the results for 1D_CNN model, and the right column shows the results for 1D_CNN_TC model.

Timit Samples

1D_CNN

1D_CNN_TC

VCTK Samples

1D_CNN

1D_CNN_TC

Noise Analysis

The following examples are generated from noisy speech. The left column shows the results for 1D_CNN model, and the right column shows the results for noise resilient model, namely 1D_CNN_NR.

9 dB Signal-to-Noise Ration (SNR)

1D_CNN

1D_CNN_NR

Babble Noise

Factory Noise

Speech-Shaped Noise

Motorcycle Noise

Cafeteria Noise

Comparison with Ground-Truth (GT) Landmarks

The following videos contain both ground-truth landmarks (black lines) and the generated landmarks using 1D_CNN model (red_lines). The samples are from STEVI corpus.

Resources

You can download the pre-trained talking face models and offline generation code from here.Publications

Sefik Emre Eskimez, Ross K. Maddox, Chenliang Xu, and Zhiyao Duan, Generating talking face landmarks from speech, in Proc. International Conference on Latent Variable Analysis and Signal Separation (LVA/ICA), 2018.

References

[1] Dodd, Barbara Ed, and Ruth Ed Campbell, “Hearing by eye: The psychology of lip-reading,” The psychology of lip-reading Lawrence Erlbaum Associates, Inc, 1987.

[2] Maddox, Ross K., et al., “Auditory selective attention is enhanced by a task-irrelevant temporally coherent visual stimulus in human listeners,” Elife 4 2015.